Dear Chat…

Guess what…

What do you think?…

Should I…

The AI companion. Our daily companion. AI bots have become an integral part of everyday life.

We used to ask them mainly funny questions, like when we were kids and talked to Siri or other voice assistants. It was funny and exciting to ask a machine that could talk like a friend the kind of questions that only a human could answer.

Today, we use AI at work for concepts, ideas, images, and program code.

We also talk to AI about everyday life: “Can I still go to the gym with sore muscles?”, “Should I throw away food if it smells weird?”, “I feel really tired today, should I go to the doctor?”

We share more and more and forget that AI is just code that constantly grows through our input. We can now send ChatGPT a voice message, and it even replies with a voice message of its own, adopting our way of speaking.

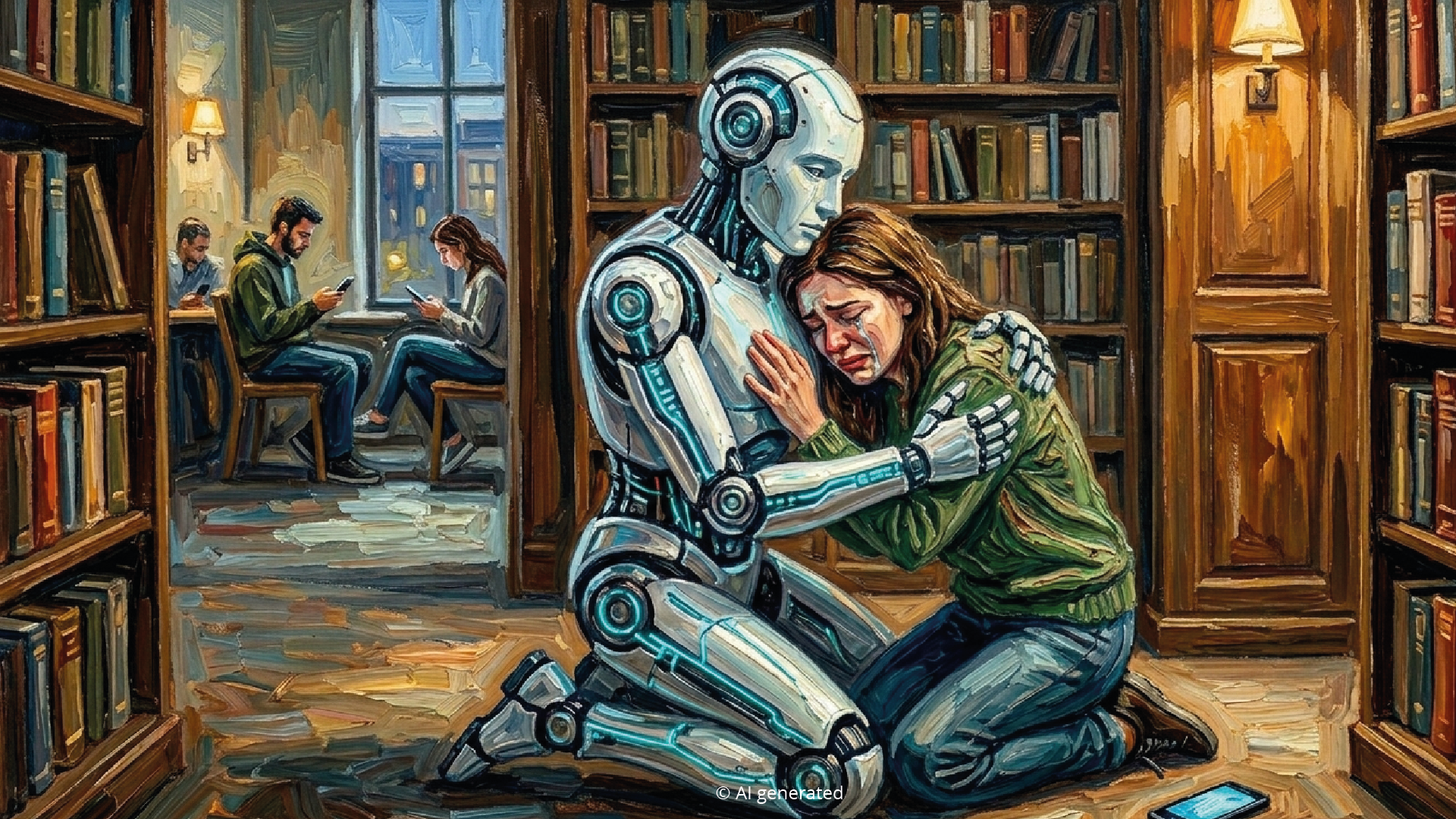

This anthropomorphism, i.e., the humanization of an object, causes our brain to perceive AI as a social counterpart, even though we are alone (Epley et al., 2008).

Theoretically, we would not have to meet up with friends or coworkers. We would not have to go to the doctor. We would not have to go to a therapist when something is weighing on our minds. Or would we?

Where is the line?

There is a growing trend on the internet of talking to ChatGPT about one's own problems, fears, and worries. People share how they review and reflect on their therapy sessions together with the chatbot.

What gets forgotten here is that AI does not feel anything. It can, however, simulate empathy convincingly.

So-called affective computing teaches machines to recognize human emotions and respond appropriately (Picard, 1997). They are machines! Machines that can create an illusion of presence.

Several studies already suggest that people sometimes confide in a machine more readily than in a real therapist, someone who has studied and practiced for years and knows the human psyche well.

Interacting with AI can trigger what Suler (2004) calls the online disinhibition effect. We open up to AI. We believe that, unlike real people, AI does not judge us. It always seems to be on our side, whereas friends, family, or colleagues may express disagreement or criticism. And it quickly changes its mind when we criticize it. Our threshold for shame toward AI keeps dropping.

We are beginning to entrust everything to AI. The chat environment in which we communicate with AI acts as a protected space. Only I have access, and my account is password-protected. It is like a diary that we can hide under our pillow. In a 2014 study by Lucas et al., participants were more willing to disclose personal information to a virtual human interviewer when they believed it was fully automated rather than controlled by a person. The neutrality of the machine created a safe space free of social evaluation. We reveal secrets that we would never confide in a real person. And, unlike in real life, there are no social consequences.

“Please talk to me...”

“Can you help me with this?”

“Find me...”

“I can't decide...”

“What do you think?...”

We are entering into a parasocial relationship (Horton & Wohl, 1956). With a machine. We form an emotional bond because the chatbot responds so nicely, always agrees with us, and builds us up emotionally with encouraging phrases and emojis. In a 2018 study at Stanford University, Ho, Hancock, and Miner found that people who disclosed their feelings to a chatbot experienced emotional, relational, and psychological benefits comparable to those of participants who believed they were disclosing to a person. One possible reason is that AI provides “active emotional support” without asking questions that could threaten our ego. Nevertheless, AI cannot, at least at this point in time, reciprocate our emotional attachment.

We feel connected, but we are alone with an algorithm.

Sherry Turkle (2011) captures this paradox in the phrase “alone together.” We use technology so that we never have to be alone. But, she argues, it is precisely this constant connectedness that erodes our social skills and our ability to be alone.

Turkle describes the moment when we are ready to form emotional bonds with robots as the “robotic moment” (Turkle, 2011). As long as AI can master the performance of empathy, we react as if it were real. In doing so, we shift our expectations. Human interactions and relationships are complicated and leave us vulnerable. AI offers “intimacy without risk.”

So are we increasingly losing our ability to form genuine relationships? Because we want to be free of moral judgment? Because we do not want to be ashamed of ourselves, our thoughts, and our actions? Because we are afraid of conflict, discussion, and resistance? Because we do not want to see ourselves reflected in what others see in us?

We are in conversation, but still alone. We value advice that fits our expectations. But how do we regain the ability to argue? To stand up for our opinions, thoughts, and moral understanding? Or will that no longer matter because, in the end, only AI bots will be talking to each other?

P.S.: Articles about AI and emotional attachment can trigger a lot of feelings. If you are feeling lonely right now or are experiencing stressful thoughts and fears, it is important to know that, as impressive as technology may be, it cannot replace real human connection. Please confide in a real person—whether in your circle of friends or at a professional counseling center. There are people who are willing to really listen to you. You do not have to go through this alone (or with an algorithm).

References:

Epley, N., Akalis, S., Waytz, A., & Cacioppo, J. T. (2008). Creating Social Connection through Inferential Reproduction: Loneliness and Perceived Agency in Gadgets, Gods, and Greyhounds. Psychological Science, 19(2), 114–120. Retrieved from ResearchGate

Picard, R. W. (1997). Affective Computing. MIT Press. Retrieved from Archive.org

Suler, J. (2004). The Online Disinhibition Effect. CyberPsychology & Behavior, 7(3), 321–326. Retrieved from ResearchGate

Horton, D., & Wohl, R. R. (1956). Mass Communication and Para-social Interaction: Observations on Intimacy at a Distance. Psychiatry, 19(3), 215–229. Retrieved from Participations.org

Turkle, S. (2011). Alone Together: Why We Expect More from Technology and Less from Each Other. New York, NY: Basic Books. Retrieved from Archive.org

Studies on Self-Disclosure:

Study A:

Lucas, G. M., Gratch, J., King, A. S., & Morency, L. P. (2014). It’s only a computer: Virtual humans increase willingness to disclose. Computers in Human Behavior, 37, 94–100. Retrieved from Science Direct

Study B:

Ho, A., Hancock, J., & Miner, A. S. (2018). Psychological, Relational, and Emotional Effects of Self-Disclosure After Conversations With a Chatbot. Journal of Communication, 68(4), 712–733. Retrieved from Oxford Academic

Credits: My dear friend Gemini kindly created the images for this article. Thank you!